I recently had the opportunity to architect a solution consisting of three vSphere 5 hosts connecting to a NetApp FAS2040. Storage connectivity would be via iSCSI. The storage network would run on two Cisco 2960G switches, soon to be replaced by stacked Cisco 3750s.

The requirements were stock standard: as much throughput as possible, with as much redundancy as possible. This meant going active/active on the iSCSI links. Here is how I did it.

NetApp FAS2040 Configuration

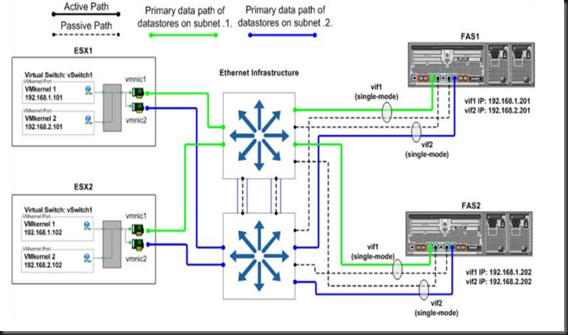

This little SAN has eight 1Gb Ethernet ports. Because the Cisco 2960G switches do not support link aggregation that spans both switches (this is where the 3750s will come in), I had to come up with a simpler design: what NetApp terms a single-mode design. My design allows for:

- Two active connections to each controller, thus a total of four active sessions

- Storage path HA

- Load balancing across links

- Multipathing handled by vSphere storage MPIO rather than by switch-side configuration

Virtual Interface (VIF) Configuration:

All VIFs are single-mode (active/passive).

Cont1_Vif01 - e0a/e0b (e0a will be active, connected to switch 1 / e0b passive connected to switch 2) IP – 192.168.1.1

Cont1_Vif02 - e0c/e0d (e0c will be active, connected to switch 2 / e0d passive connected to switch 1) IP – 192.168.2.1

Cont2_Vif01 - e0a/e0b (e0a will be passive, connected to switch 1 / e0b active connected to switch 2) IP – 192.168.1.2

Cont2_Vif02 - e0c/e0d (e0c will be passive, connected to switch 2 / e0d active connected to switch 1) IP – 192.168.2.2

This image, courtesy of NetApp, explains it infinitely better than my wall of text:-)

I also configured partner takeover for all VIFs. If a controller fails, this allows the surviving controller to take over its partner's VIFs.
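To sanity-check the cabling, the VIF list above can be written down as data and each switch failed in turn. This is a minimal Python sketch, not anything NetApp ships: the `Vif` class and the `serving_link` and `links_per_switch` helpers are names invented for illustration, and it models switch failures only, not controller takeover.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

Link = Tuple[str, int]  # (port name, switch number)


@dataclass(frozen=True)
class Vif:
    name: str
    ip: str
    active: Link   # link that carries traffic in normal operation
    passive: Link  # link that takes over if the active one is down


# Transcribed from the VIF list above.
VIFS = [
    Vif("Cont1_Vif01", "192.168.1.1", ("e0a", 1), ("e0b", 2)),
    Vif("Cont1_Vif02", "192.168.2.1", ("e0c", 2), ("e0d", 1)),
    Vif("Cont2_Vif01", "192.168.1.2", ("e0b", 2), ("e0a", 1)),
    Vif("Cont2_Vif02", "192.168.2.2", ("e0d", 1), ("e0c", 2)),
]


def serving_link(vif: Vif, failed_switch: Optional[int] = None) -> Optional[Link]:
    """Return the link carrying traffic for a VIF, or None if both are down."""
    for link in (vif.active, vif.passive):
        if link[1] != failed_switch:
            return link
    return None


def links_per_switch(failed_switch: Optional[int] = None) -> Dict[int, int]:
    """Count how many VIFs each switch is serving."""
    counts = {1: 0, 2: 0}
    for vif in VIFS:
        link = serving_link(vif, failed_switch)
        if link is not None:
            counts[link[1]] += 1
    return counts


if __name__ == "__main__":
    for failed in (None, 1, 2):
        print(f"failed switch: {failed} -> VIFs served per switch: {links_per_switch(failed)}")
```

In normal operation each switch serves two VIFs, and losing either switch moves all four VIFs onto the survivor, so every target IP stays reachable.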

Ethernet Storage Network Configuration

On the storage network I had to configure 2 critical settings:

- Spanning Tree Portfast

- Jumbo Frames

When connecting ESX and NetApp storage arrays to Ethernet storage networks, NetApp highly recommends configuring the Ethernet ports to which these systems connect as RSTP edge ports. This is done like so:

Switch2960(config)# interface gigabitethernet2/0/2

Switch2960(config-if)# spanning-tree portfast

Next up, Jumbo Frames:

Switch2960(config)# system mtu jumbo 9000

Switch2960(config)# exit

Switch2960# reload
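As a rough illustration of why jumbo frames are worth the reload, the sketch below compares how much of each frame on the wire is TCP payload at an MTU of 1500 versus 9000. It assumes IPv4 and TCP headers without options (20 bytes each) and standard Ethernet framing without a VLAN tag, and it ignores iSCSI PDU headers, so treat the numbers as approximate.

```python
# Per-frame Ethernet overhead in bytes:
# preamble + start-of-frame (8), header (14), FCS (4), inter-frame gap (12).
ETH_OVERHEAD = 8 + 14 + 4 + 12
IP_HEADER = 20   # IPv4 header without options (assumption)
TCP_HEADER = 20  # TCP header without options (assumption)


def payload_efficiency(mtu: int) -> float:
    """Fraction of bytes on the wire that are TCP payload for a full-size frame."""
    payload = mtu - IP_HEADER - TCP_HEADER
    on_wire = mtu + ETH_OVERHEAD
    return payload / on_wire


if __name__ == "__main__":
    for mtu in (1500, 9000):
        print(f"MTU {mtu}: {payload_efficiency(mtu):.1%} of wire bytes are payload")
```

Under these assumptions the payload share rises from roughly 95% to roughly 99%, and the same amount of data needs about one sixth as many frames. The gain only applies if every hop uses the larger MTU, including the NetApp interfaces and the vSphere vSwitch and VMkernel ports.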

vSphere Configuration

I am in love with vSphere 5, and one of the biggest reasons is that a lot of the configuration parameters that used to be command-line only have been moved into the GUI. Another reason is Multiple TCP Session Support for iSCSI. This feature enables round robin load balancing using VMware native multipathing and requires a VMkernel port to be defined for each physical adapter port assigned to iSCSI traffic. That said, let’s get configuring:

- Open your vCenter Server

- Select an ESXi host

- In the right pane, click the Configuration tab

- In the Hardware box, select Networking

- In the upper-right corner, click Add Networking to open the Add Network wizard

- Select the VMkernel radio button and click Next

- Configure the VMkernel by providing the required network information. NetApp requires separate subnets for active/active iSCSI connections, therefore we will create two VMkernels, on the 192.168.1.x and 192.168.2.x subnets respectively.

- Configure each VMkernel to use a single active adapter that is not used by any other iSCSI VMkernel. Also, each VMkernel must not have any standby adapters. If using a single vSwitch, it is necessary to override the switch failover order for each VMkernel port used for iSCSI. There must be only one active vmnic, and all others should be set to Unused.

- The VMkernels created in the previous steps must be bound to the software iSCSI storage adapter. In the Hardware box for the selected ESXi server, select Storage Adapters.

- Right-click the iSCSI Software Adapter and select Properties. The iSCSI Initiator Properties dialog box appears

- Click the Network Configuration tab

- In the top window, the VMkernel ports that are currently bound to the iSCSI software interface are listed

- To bind a new VMkernel port, click the Add button. A list of eligible VMkernel ports is displayed. If no eligible ports are displayed, make sure that the VMkernel ports have a 1:1 mapping to active vmnics as described earlier

- Select the desired VMkernel port and click OK.

- Click Close to close the dialog box

- At this point, the vSphere Client will recommend rescanning the iSCSI adapters. After doing this, go back into the Network Configuration tab to verify that the new VMkernel ports are shown as active, as per the image below.
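The eligibility rule from the steps above (exactly one active vmnic per iSCSI VMkernel port, no standby adapters, and no vmnic shared between iSCSI VMkernel ports) can be expressed as a small check. This is an illustrative Python sketch that works on a hand-written dictionary; it does not call any VMware API, and the port group and vmnic names are hypothetical.

```python
from typing import Dict, List

# Hypothetical teaming layout: port group name -> active and standby uplinks.
GOOD = {
    "iSCSI-1": {"active": ["vmnic2"], "standby": []},
    "iSCSI-2": {"active": ["vmnic3"], "standby": []},
}

# Same port groups, misconfigured in three different ways.
BAD = {
    "iSCSI-1": {"active": ["vmnic2"], "standby": ["vmnic3"]},
    "iSCSI-2": {"active": ["vmnic2", "vmnic3"], "standby": []},
}


def binding_problems(portgroups: Dict[str, Dict[str, List[str]]]) -> List[str]:
    """Return a list of reasons why the port groups would not be eligible for binding."""
    problems = []
    owner = {}  # vmnic -> first port group that uses it as an active uplink
    for name, teaming in portgroups.items():
        active, standby = teaming["active"], teaming["standby"]
        if len(active) != 1:
            problems.append(f"{name}: needs exactly one active vmnic, has {len(active)}")
        if standby:
            problems.append(f"{name}: standby adapters must be empty, has {standby}")
        for nic in active:
            if nic in owner:
                problems.append(f"{name}: {nic} is already active for {owner[nic]}")
            else:
                owner[nic] = name
    return problems


if __name__ == "__main__":
    print("good layout:", binding_problems(GOOD) or "no problems")
    for problem in binding_problems(BAD):
        print("bad layout:", problem)
```

If the Add dialog shows no eligible VMkernel ports, walking through the same three conditions by hand in the port group's NIC Teaming settings is usually the quickest way to find the culprit.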

Congratulations, you now have active/active, redundant iSCSI sessions into your NetApp SAN!
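To see where the four sessions come from, the sketch below pairs each VMkernel port with the NetApp VIF addresses on its own subnet. The target IPs are the ones from the VIF list; the VMkernel names and addresses (`vmk1` at 192.168.1.11, `vmk2` at 192.168.2.11) and the /24 mask are assumptions made for illustration, since the setup above does not state them.

```python
import ipaddress
from typing import List, Tuple

# Target addresses, taken from the VIF configuration.
TARGETS = {
    "Cont1_Vif01": "192.168.1.1",
    "Cont1_Vif02": "192.168.2.1",
    "Cont2_Vif01": "192.168.1.2",
    "Cont2_Vif02": "192.168.2.2",
}

# Hypothetical VMkernel ports, one per storage subnet (addresses and mask assumed).
VMKERNELS = {
    "vmk1": ipaddress.ip_interface("192.168.1.11/24"),
    "vmk2": ipaddress.ip_interface("192.168.2.11/24"),
}


def iscsi_sessions() -> List[Tuple[str, str, str]]:
    """List (vmkernel, vif, target ip) for every target on the VMkernel's own subnet."""
    sessions = []
    for vmk, iface in VMKERNELS.items():
        for vif, ip in TARGETS.items():
            if ipaddress.ip_address(ip) in iface.network:
                sessions.append((vmk, vif, ip))
    return sessions


if __name__ == "__main__":
    for vmk, vif, ip in iscsi_sessions():
        print(f"{vmk} -> {vif} ({ip})")
```

Each VMkernel port reaches one VIF on each controller, which gives two sessions per controller and four per host, matching the design goals listed at the top.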
